How Much Does One ChatGPT Message Really Cost? (Detailed Breakdown)

ChatGPT feels almost magical.

You type a question,, and within seconds you get a detailed answer, idea, code snippet, or solution.

But behind this simple interaction lies one of the most powerful (and also expensive) technology infrastructures ever built.

So… how much does one ChatGPT message really cost?

Is it free? Cheap? Or surprisingly expensive?

Let’s break it down in simple, practical terms.

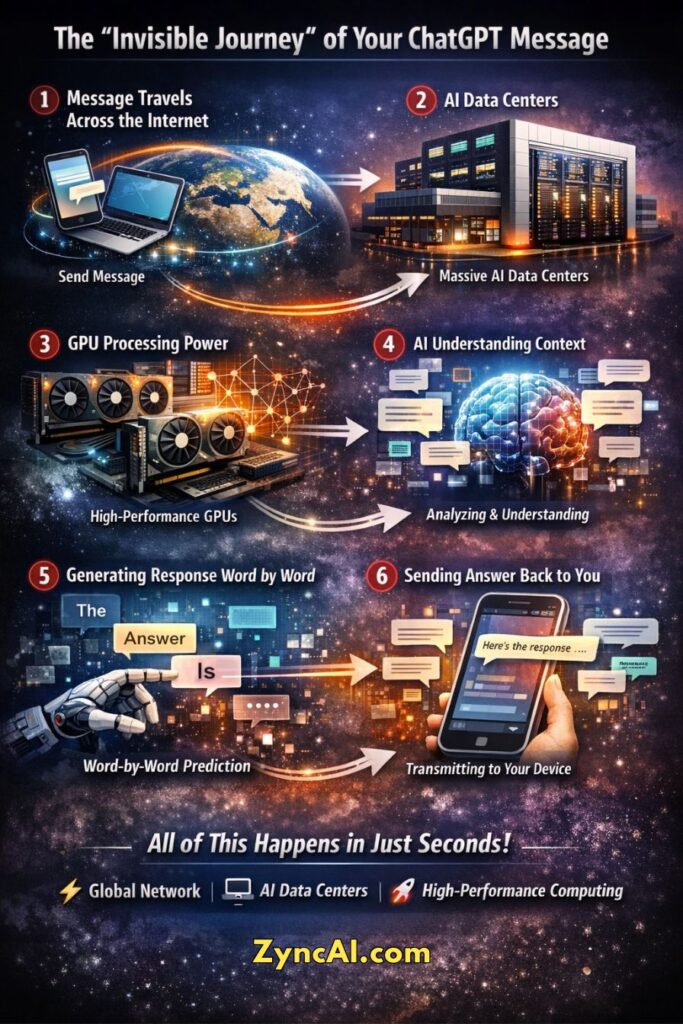

1. The “Invisible Journey” of Your ChatGPT Message

When you send a message to ChatGPT, it may feel like you are simply typing a question and getting an instant answer.

But in reality, your message goes through a sophisticated global technology pipeline, one that involves high-speed networks, powerful data centers, advanced AI models, and real-time computing decisions.

Let’s break down what really happens behind the scenes.

🌐 Step 1: Your Message Travels Across the Internet

The moment you hit send, your message begins a digital journey.

It travels from your device, whether it’s a phone, laptop, or tablet, via your local internet connection, then across multiple network routes and global servers. These routes are optimized to deliver your request to the nearest available AI infrastructure as quickly as possible.

This entire transmission usually happens in milliseconds, thanks to modern fiber-optic networks and distributed cloud systems.

🏢 Step 2: Arrival at Large-Scale AI Data Centers

Your message does not go to a single computer.

Instead, it reaches massive AI data centers, facilities that contain thousands (sometimes tens of thousands) of specialized machines designed specifically for AI workloads.

These data centers:

- Operate 24/7

- Use advanced cooling systems to manage heat

- Consume enormous amounts of electricity

- Are located strategically around the world to reduce latency

Their goal is simple: process millions of AI requests simultaneously without delays.

⚡ Step 3: High-Performance GPUs Start Processing

Once your message reaches the AI system, it is assigned to high-performance GPUs (Graphics Processing Units) or other specialized AI chips.

Unlike normal CPUs, these processors are built to handle parallel mathematical computations, which are essential for running large language models.

At this stage:

- Your message is converted into numerical representations called tokens

- Complex matrix calculations begin

- The AI system loads the relevant parts of the model into memory

- Compute resources are dynamically allocated based on demand

This step is one of the most expensive parts of the entire process.

🧠 Step 4: The AI Reads Context and Understands Intent

Before generating a response, the AI doesn’t just read your latest message.

It also analyzes:

- Previous conversation history

- Instructions or system context

- Language patterns and intent

- Possible meanings and ambiguities

This helps the model understand what you really want, not just what you typed.

For example, if you ask a follow-up question, the AI connects it with earlier messages to maintain continuity.

🔮 Step 5: Predicting the Response, Word by Word

Now comes the core AI magic.

The language model begins generating the response by predicting the most likely next word (token) based on probability.

It does this repeatedly:

- Predict next word

- Add it to the sentence

- Recalculate probabilities

- Predict the next word again

This happens hundreds or thousands of times per response.

Even a short answer may involve billions of mathematical operations behind the scenes.

📡 Step 6: Sending the Answer Back to You

Once the response is generated:

- The text is packaged and transmitted back through global networks

- Your device receives the data

- The interface displays the answer, often streamed in real time

From your perspective, it feels like the AI is “typing” to you instantly.

⏱️ All of This Happens in Just Seconds

Despite the enormous complexity, this entire invisible journey, from sending your message to receiving a response, typically takes only a few seconds.

But achieving this speed requires:

- Massive computing infrastructure

- Intelligent traffic routing

- Advanced AI optimization techniques

- Continuous system monitoring and scaling

In short, every ChatGPT message may feel simple on the surface, but behind it lies a global network of powerful machines working together in real time.

2. ChatGPT Uses Tokens, Not Words

One of the most important and often misunderstood concepts behind AI usage and pricing is tokens.

AI models like ChatGPT don’t actually read text the way humans do.

They don’t “see” full sentences, paragraphs, or ideas.

Instead, they process language as tokens, which are small pieces of text that can represent:

- A full word

- Part of a word

- A punctuation mark

- Or even a space

Understanding tokens helps you clearly see how AI usage is measured, why costs vary, and why longer conversations become more expensive.

🧩 What Exactly Is a Token?

A token is simply a unit of text that the AI can process mathematically.

Depending on the language and word complexity:

- Short common words → usually 1 token

- Long or complex words → may be split into multiple tokens

- Symbols and punctuation → can also count as tokens

For example,

| Text | Approx Tokens |

|---|---|

| Hello | 1 token |

| ChatGPT | 2–3 tokens |

| How are you today? | ~5 tokens |

| Artificial Intelligence | ~3 to 4 tokens |

| Write a 500-word blog post | ~700 tokens |

As a rough rule:

1 token ≈ 0.75 words in English

or

100 tokens ≈ 75 words

This isn’t exact, but it helps estimate usage.

🔄 Tokens Include Both Input and Output

When you use ChatGPT, the total token count is not just your question.

It includes:

✅ Prompt Tokens (Input)

Everything you type:

- Questions

- Instructions

- Copy-pasted content

- Long blog outlines

- Code snippets

Even formatting and line breaks can slightly affect token count.

✅ Completion Tokens (Output)

Everything the AI generates:

- Answers

- Explanations

- Lists

- Code

- Long blog posts

So if you write a 100-token prompt and receive a 600-token response,

your total usage becomes 700 tokens.

🧠 Conversation Memory Also Uses Tokens

This is where many users don’t realize how token usage grows.

ChatGPT often re-reads previous conversation messages to understand context.

For example:

- Message 1 → 150 tokens

- Message 2 → 200 tokens

- Message 3 → 250 tokens

When you send Message 4, the AI may process:

150 + 200 + 250 + new message tokens

This means long conversations become progressively heavier in token usage.

That’s why:

- Long chats feel slightly slower

- Complex threads cost more compute

- Resetting or starting a new chat can reduce token load

⚙️ Why Tokens Matter for AI Pricing

AI infrastructure costs are largely tied to how many tokens are processed.

More tokens mean:

- More GPU computation

- More memory usage

- Longer processing time

- Higher electricity consumption

- Greater infrastructure load

This is why AI platforms price usage based on tokens instead of “per message” or “per word.”

A short question with a long answer may cost more than many small interactions.

📊 Tokens Add Up Faster Than You Think

Let’s look at a realistic scenario:

- You paste a 1,500-word article → ~2,000 tokens

- Ask for improvements → 50 tokens

- AI gives suggestions → 600 tokens

Total in one interaction:

👉 ~2,650 tokens processed

Multiply this by millions of users globally, and you can see why running AI systems requires massive computing power.

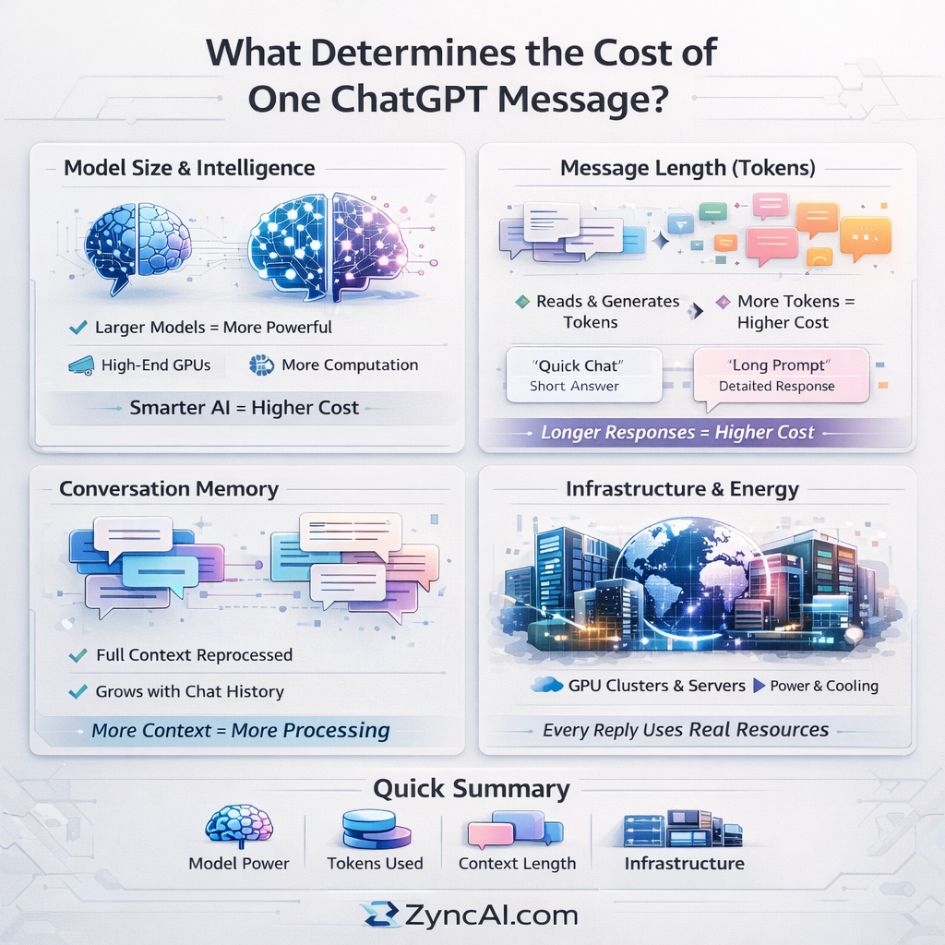

3. Main Factors That Decide the Cost of One ChatGPT Message

Understanding the real cost of a single ChatGPT message requires looking beyond just “typing a prompt and getting a reply”. Each interaction triggers a chain of computational events across powerful infrastructure. Let’s break down the key factors in depth.

3.1. Model Size and Intelligence Level

Not all AI models are created equal.

Large scale models (like advanced GPT systems) are built with billions or even trillions of parameters. These parameters act like the “brain cells” of the AI, allowing it to:

- Understand complex instructions

- Generate human-like responses

- Perform reasoning, coding, and analysis

But this intelligence comes at a cost.

Why larger models are expensive:

- Require high-end GPUs (like NVIDIA A100/H100 class)

- Need significantly more VRAM (memory) per request

- Perform billions of mathematical operations per second

- Often run across multiple GPUs simultaneously

👉 The more intelligent the model, the more computation is required per message.

Trade-off:

- Larger models → Better accuracy, creativity, reasoning

- Smaller models → Faster, cheaper, but less capable

This is why many platforms offer multiple model tiers — balancing cost vs performance depending on the use case.

3.2. Message Length (Token Usage)

AI doesn’t process text the way humans do. Instead, everything is broken into tokens — small chunks of words.

For example:

- “Hello” → ~1 token

- “How are you today?” → ~5 tokens

- 1,000 words → ~1,300–1,500 tokens

Each token:

- Needs to be read (input tokens)

- Needs to be generated (output tokens)

👉 You are effectively paying for both sides of the conversation.

Why longer messages cost more:

- More tokens = more computation

- Longer responses = more generation time

- Complex prompts = deeper reasoning chains

Hidden factor: Output size

Even if your prompt is short, a long answer increases cost significantly.

Example:

- Prompt: “Explain AI” → short answer → low cost

- Prompt: “Write a 2000-word blog post on AI trends” → high cost

👉 Output tokens often cost more than input tokens in many pricing models.

3.3. Conversation Memory (Context Window)

One of ChatGPT’s most powerful features is its ability to remember context within a conversation.

But this convenience has a hidden cost.

Each time you send a new message, the model may process:

- Your latest prompt

- Previous user messages

- Previous AI responses

- System-level instructions

👉 This entire “context window” is reprocessed every time.

Why this increases cost:

- More tokens accumulate over time

- The model doesn’t “remember” cheaply, it re-reads everything

- Longer chats = exponential token growth

Example:

- Message 1 → 100 tokens

- Message 5 → 500+ tokens processed

- Message 20 → thousands of tokens processed per reply

👉 Even a short new question can become expensive inside a long conversation.

Optimization tip (for readers):

- Start a new chat for new topics

- Avoid unnecessary long threads

- Summarize context instead of repeating full history

3.4. Infrastructure and Energy Usage

Behind every ChatGPT response is a massive global system, far beyond a single server.

What actually powers one response:

- High-performance GPU clusters

- Distributed data centers

- Ultra-fast networking systems

- AI inference engines optimized for latency

- Cooling systems to prevent overheating

These systems are run by organizations like OpenAI and cloud providers such as Microsoft Azure.

Major cost components:

- ⚡ Electricity – GPUs consume enormous power

- ❄️ Cooling systems – Prevent overheating in dense server racks

- 🧑🔧 Engineering teams – Maintain uptime, optimize performance

- 🌐 Networking – Deliver responses globally in milliseconds

- 🔁 Hardware depreciation – GPUs cost thousands of dollars each

👉 Training models costs millions, but running them (inference) daily is also extremely expensive.

Important insight:

Even a “simple” response:

- Activates powerful hardware

- Uses shared global infrastructure

- Competes for resources with millions of other users

4. Estimated Cost of One ChatGPT Message (Realistic Range)

When people ask “How much does one ChatGPT message cost?”, the honest answer is: it depends heavily on what you ask and how the AI responds.

There isn’t a single fixed price per message. Instead, costs are influenced by:

- Number of tokens (input + output)

- Model complexity (basic vs advanced reasoning models)

- Response length and depth

- Context (conversation history)

👉 That’s why we talk about realistic ranges, not exact numbers.

💡 How to Interpret These Cost Ranges

Think of it like this:

- A short factual question is like a quick Google search → minimal compute

- A detailed explanation is like asking an expert to write a paragraph → moderate compute

- A long technical output is like hiring a specialist to write a report → high compute

Even though these costs are tiny per message, they scale massively across millions of users.

📊 Expanded Cost Estimates Table

| Message Type | Example Prompt | Estimated Cost |

|---|---|---|

| Short factual query | “What is GDP?” | $0.001 – $0.005 |

| Very short chat reply | “Yes / No / Thanks” | $0.0005 – $0.002 |

| Basic explanation | “Explain inflation in simple terms” | $0.003 – $0.01 |

| Medium explanation | “Explain how blockchain works” | $0.005 – $0.02 |

| Long detailed answer | “Write a 1000-word article on AI trends” | $0.02 – $0.08 |

| Long technical response | “Explain distributed systems with examples and architecture” | $0.02 – $0.10+ |

| Code generation (simple) | “Write a Python function to sort a list” | $0.005 – $0.02 |

| Code generation (complex) | “Build a full-stack app with API and database schema” | $0.03 – $0.15+ |

| Data analysis request | “Analyze this dataset and summarize insights” | $0.02 – $0.12+ |

| Creative writing | “Write a 2000-word कहानी / story” | $0.02 – $0.10 |

| SEO blog content | “Write a blog post with headings, keywords, and meta description” | $0.03 – $0.12 |

| Multi-step reasoning | “Compare economic systems with pros/cons and future predictions” | $0.02 – $0.10+ |

| Long conversation follow-up | “Based on everything we discussed, summarize key insights” | $0.03 – $0.15+ |

| Image prompt generation | “Generate 10 detailed MidJourney prompts” | $0.01 – $0.05 |

| Translation (short) | “Translate this sentence to French” | $0.001 – $0.005 |

| Translation (long) | “Translate a 2000-word document” | $0.02 – $0.08 |

| Summarization (short) | “Summarize this paragraph” | $0.002 – $0.008 |

| Summarization (long doc) | “Summarize a research paper” | $0.02 – $0.10+ |

⚠️ Why Costs Can Go Higher Than Expected

Some requests quietly increase cost more than users realize:

1. Large Outputs

- Asking for long blogs, reports, or scripts increases output tokens significantly

- Output tokens are often the biggest cost driver

2. Deep Reasoning Tasks

- Complex prompts require multi-step thinking

- More internal computation = higher cost

3. Long Conversations

- The model reprocesses previous context

- Costs increase with every additional message

4. Advanced Models

- Premium models cost more per token

- But they deliver higher accuracy and better results

⚙️ Why Real-World Costs Are Often Lower

Even though the ranges above are realistic, actual costs are often optimized thanks to:

- Efficient model architectures

- Token compression techniques

- Smart caching of repeated queries

- Hardware acceleration (GPUs, TPUs)

- Infrastructure optimizations by providers like OpenAI

👉 This is why users can access ChatGPT at low subscription prices or even free tiers.

6. Why ChatGPT Feels “Free” or Cheap to Users

Most users access ChatGPT via,

- Subscription plans

- Free tiers

- Bundled enterprise pricing

This structure removes the need to pay per individual message, making usage feel unlimited and cost-free. Instead of thinking about each query as a transaction, users experience a smooth, all-inclusive system where costs are hidden behind monthly pricing or completely absorbed in free plans.

AI companies often,

- Optimize models for efficiency

- Use scale advantages

- Subsidize usage to grow adoption

They reduce costs by improving model performance, routing simpler queries to lighter systems, and spreading infrastructure expenses across millions of users. At the same time, subsidizing free usage helps attract new users, increase engagement, and convert them into long-term paying customers.

So while one message has a cost, users don’t directly pay per query in most cases.

Instead, the cost is abstracted, distributed, and strategically managed, which is why ChatGPT feels “free” or very cheap from a user’s perspective—even though significant resources are being used behind the scenes.

7. Cost at Massive Scale – The Real AI Economics

Now imagine,

- Millions of users

- Billions of messages daily

At this level, even a tiny cost per interaction multiplies rapidly. What seems like just a fraction of a cent per message becomes a massive financial commitment when scaled across global usage. Every prompt processed requires compute power, memory, networking, and energy repeated billions of times each day across distributed data centers worldwide.

Even if one message costs just $0.01, total daily operational cost could reach:

👉 Tens of millions of dollars

And in reality, costs can vary depending on model complexity, response length, and peak demand periods, sometimes pushing infrastructure to its limits. This is why efficiency is not just important, it’s critical for survival in the AI industry.

This is why major AI companies invest heavily in,

- Custom AI chips

- Renewable energy data centers

- Model compression techniques

- Inference optimization

Specialized hardware like AI accelerators significantly reduces the cost per computation, while renewable energy helps offset the enormous electricity consumption required to run large-scale systems. Model compression and optimization techniques make AI models smaller and faster, allowing them to serve more users with fewer resources and lower latency.

Scale changes everything.

At small volumes, AI costs feel negligible—but at global scale, they define the entire business model. The companies that succeed are those that can continuously reduce cost per message while maintaining performance, making large-scale AI both sustainable and profitable.

8. How Users and Businesses Can Reduce AI Costs

Whether you are using AI personally or via APIs, smart usage can reduce costs significantly.

Practical Tips

- Write clear and concise prompts

- Avoid unnecessarily long conversations

- Request shorter responses when possible

- Use smaller AI models for simple tasks

- Batch similar requests together

- Cache or reuse AI outputs

For businesses using AI at scale, prompt optimization alone can reduce costs 20% to 60%.

9. Will ChatGPT Become Cheaper in the Future?

Most likely, yes.

Several powerful trends are already pushing AI costs downward, making each interaction more affordable over time. As technology matures, companies are finding smarter ways to deliver the same (or even better) results using fewer resources.

Several trends are already reducing AI costs,

- More efficient AI architectures

- Better GPU / AI chip performance

- Model distillation and compression

- Increased competition

- Open-source innovation

- Renewable energy integration

New model designs require fewer computations while maintaining high quality, and modern AI chips are becoming significantly faster and more energy-efficient. Techniques like distillation and compression allow large models to be transformed into smaller, cheaper versions without losing much capability. At the same time, growing competition among AI providers is driving pricing down, while open-source models are accelerating innovation and lowering entry barriers. Renewable energy adoption also helps reduce long-term operational costs for massive data centers.

Just like cloud computing and internet bandwidth became cheaper over time,

AI inference cost is expected to decline steadily.

As adoption increases and infrastructure improves, the cost per message will likely continue to drop, making AI more accessible to individuals, businesses, and developers worldwide while enabling entirely new use cases that were previously too expensive to scale.

FAQs

Q1. What is the average cost of one ChatGPT message?

A1. The average cost ranges from $0.001 to $0.10+ per message, depending on model complexity, token usage, and response length.

Q2. Why does ChatGPT pricing depend on tokens instead of words?

A2. AI models process text as tokens (smaller chunks of words), making token-based pricing more accurate for measuring computational workload.

Q3. How many tokens are typically used in one ChatGPT interaction?

A3. A typical interaction uses 50 to 1,000+ tokens, depending on prompt length, response size, and conversation history.

Q4. Do longer conversations increase the cost per message?

A4. Yes. ChatGPT often reprocesses previous messages, so longer conversations increase total token usage and cost.

Q5. What is the difference between prompt tokens and completion tokens?

A5. Prompt tokens are your input text, while completion tokens are the AI-generated response. Both contribute to total cost.

Q6. Why do advanced AI models cost more per message?

A6. Advanced models require more computational power, memory, and processing steps, increasing infrastructure and energy costs.

Q7. Does response length directly affect ChatGPT pricing?

A7. Yes. Longer responses use more tokens, which increases the overall cost of the message.

Q8. Is ChatGPT free to use in reality?

A8. No. Each message has a real cost, but users often access it through subscriptions or subsidized plans.

Q9. How does GPU usage impact ChatGPT costs?

A9. GPUs handle AI computations, and their high cost, energy consumption, and maintenance significantly influence per-message pricing.

Q10. Do different ChatGPT models have different pricing?

A10. Yes. Smaller models are cheaper, while larger, more capable models cost more per token.

Q11. How does context window size affect cost?

A11. Larger context windows process more previous messages, increasing token usage and overall cost.

Q12. Can optimizing prompts reduce AI costs?

A12. Yes. Efficient prompts reduce unnecessary tokens, lowering total cost significantly.

Q13. What is AI inference cost?

A13. It is the cost of running an AI model to generate outputs based on user input in real time.

Q14. Why is AI inference expensive?

A14. It requires powerful hardware, high electricity consumption, and real-time processing at scale.

Q15. How does electricity consumption affect ChatGPT pricing?

A15. Data centers consume large amounts of electricity for computation and cooling, contributing to operational costs.

Q16. Do all ChatGPT messages cost the same?

A16. No. Costs vary based on length, complexity, model used, and context size.

Q17. How does scaling affect AI costs?

A17. At scale, even small per-message costs multiply into millions of dollars in operational expenses.

Q18. What is the cheapest type of ChatGPT query?

A18. Short, factual queries with minimal context are the cheapest.

Q19. What is the most expensive type of ChatGPT query?

A19. Long, complex tasks requiring deep reasoning and large outputs are the most expensive.

Q20. Does streaming responses change the cost?

A20. No. Streaming affects delivery speed, not the total token-based cost.

Q21. How do AI companies reduce inference costs?

A21. Through model optimization, hardware efficiency, caching, and distributed computing.

Q22. What is model distillation in AI cost reduction?

A22. It is the process of creating smaller models that replicate larger ones, reducing computation costs.

Q23. Does API usage cost more than ChatGPT subscriptions?

A23. Yes. API pricing is typically pay-per-token, while subscriptions bundle usage into fixed plans.

Q24. How do businesses manage large-scale AI costs?

A24. By optimizing prompts, selecting appropriate models, and limiting unnecessary token usage.

Q25. Can caching responses reduce AI costs?

A25. Yes. Reusing previous outputs avoids repeated computation.

Q26. What role do data centers play in AI cost?

A26. Data centers host the hardware and infrastructure required to run AI models, contributing heavily to costs.

Q27. How does latency relate to cost in AI systems?

A27. Lower latency often requires more powerful hardware, which can increase costs.

Q28. Are open-source AI models cheaper to run?

A28. They can be, but still require infrastructure and operational resources.

Q29. How does batching requests reduce costs?

A29. It improves efficiency by processing multiple requests together, reducing overhead.

Q30. What is the impact of token limits on pricing?

A30. Higher token limits allow longer interactions but increase potential costs.

Q31. How do AI chips improve cost efficiency?

A31. Specialized chips are optimized for AI workloads, reducing energy use and computation cost.

Q32. Does fine-tuning a model increase or reduce costs?

A32. It may increase initial cost but reduce long-term inference costs by improving efficiency.

Q33. Why is AI cheaper at scale?

A33. Economies of scale reduce per-unit cost through optimization and infrastructure sharing.

Q34. How does compression help reduce AI costs?

A34. It reduces model size and computation requirements, lowering resource usage.

Q35. Can limiting response length save money?

A35. Yes. Fewer tokens in the output directly reduce costs.

Q36. How does competition impact AI pricing?

A36. Increased competition drives innovation and lowers costs for users.

Q37. Will AI costs decrease in the future?

A37. Yes. Advances in hardware, software, and competition are expected to reduce costs over time.

Q38. How does renewable energy affect AI costs?

A38. It can reduce long-term operational costs of data centers.

Q39. Is AI cost predictable per message?

A39. Not exactly. It varies based on token usage and model selection.

Q40. Why should users care about AI message cost?

A40. Understanding costs helps optimize usage, reduce expenses, and use AI more efficiently.