What Is Training a Model in AI? (Beginner to Advanced Guide)

Artificial Intelligence may sound complex, futuristic, or even mysterious. But at its core, AI works because of one essential process called model training. If you understand what training a model means, you understand the foundation of modern AI itself. Every smart system you interact with today, ranging from voice assistants to recommendation engines exists because a model has been trained using data.

1. Simple Explanation of AI Model Training

Training a model in AI simply means teaching a computer system to learn patterns from data so it can make decisions or predictions on its own.

Instead of writing thousands of strict rules manually, developers give an AI model large amounts of examples. The model studies those examples, detects patterns, adjusts its internal parameters, and gradually improves its performance.

Think of it like learning through experience,

- A child learns to recognize dogs after seeing many dogs.

- A musician improves by practicing repeatedly.

- A student becomes better at math by solving many problems.

AI models learn in a very similar way. It is by analyzing data again and again until they become accurate.

In technical terms, training involves following,

- Feeding data into an algorithm

- Measuring prediction errors

- Adjusting internal weights

- Repeating the process until performance improves

The result is a trained AI model capable of handling new situations it has never seen before.

Why “Training” Is the Core of Artificial Intelligence

Training is the heart of AI because artificial intelligence does not truly “think” or “understand” like humans. Instead, AI becomes intelligent through learning patterns from data.

Without training,

- An AI model is just empty code.

- It cannot recognize images.

- It cannot understand language.

- It cannot make recommendations.

Training transforms a basic algorithm into a smart system !!

Following is exactly what separates traditional software from AI,

| Traditional Software | Artificial Intelligence |

|---|---|

| Rules are manually programmed | System learns rules automatically |

| Fixed behavior | Improves with data |

| No learning ability | Learns from experience |

Every major AI breakthrough, starting from self-driving technology to generative AI is there because models were trained on massive datasets and continuously refined.

What Is an AI Model?

Before understanding how training works, you first need to understand what exactly is being trained. An AI model is the core engine behind any intelligent system. It is the structure that learns from data, identifies patterns, and makes decisions or predictions.

When people say “the AI learned this,” what they really mean is that the model adjusted its internal mathematical structure based on data.

Let’s break that down in simple terms.

An AI model is a mathematical function designed to recognize patterns in data and produce useful outputs.

At its core, every AI model:

- Takes input data

- Processes that data using learned patterns

- Produces an output

You can think of it like this:

Input → Processing → Output

Let’s look at simple examples:

- Input: An image of a cat

Processing: The model analyzes shapes, textures, and patterns

Output: “This is a cat” - Input: An email message

Processing: The model checks keywords, structure, and sender data

Output: “Spam” or “Not Spam” - Input: A search query

Processing: The model matches intent and relevance

Output: Ranked search results

Behind the scenes, the “processing” part is powered by mathematical equations that adjust based on training data. These equations contain parameters (often called weights) that change during training to improve accuracy.

Without training, the model’s outputs would be random. With training, the model becomes increasingly accurate over time.

In short:

👉 An AI model is a system of mathematical rules that learns from examples to make predictions about new data.

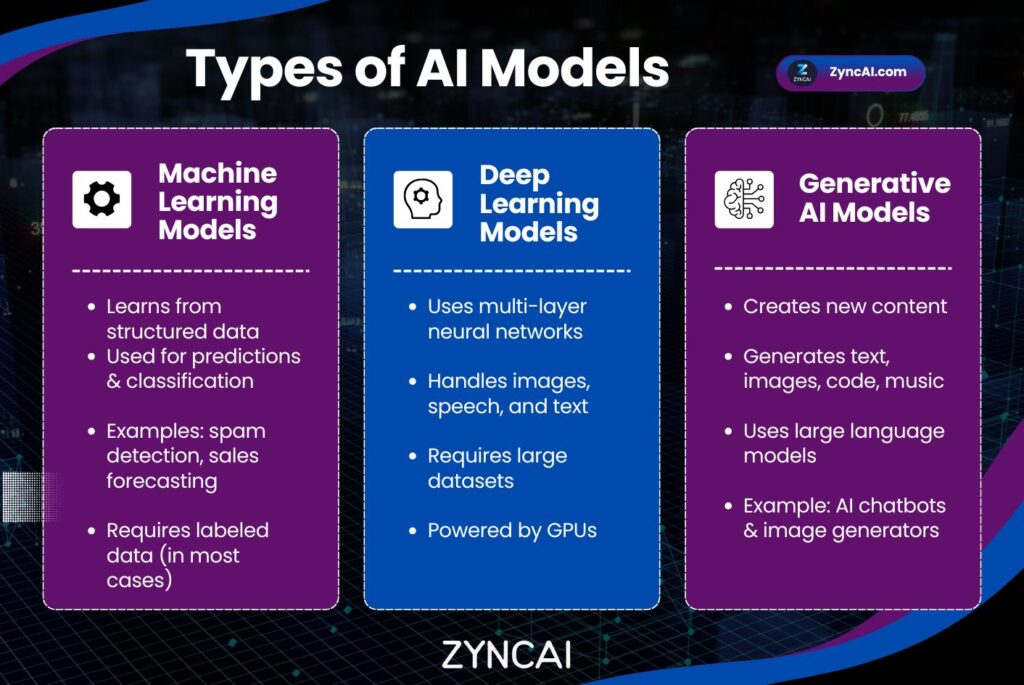

2.2 Types of AI Models

Not all AI models are the same. They vary in complexity, purpose, and architecture. Below are the main categories you will encounter.

- Machine Learning Models

- Deep Learning Models

- Generative AI Models

2.1 Machine Learning Models

Machine Learning (ML) models are the foundation of most traditional AI systems.

They are typically used for things like,

- Classification (spam detection, fraud detection)

- Regression (price prediction)

- Clustering (customer segmentation)

Examples of ML algorithms includes following,

- Linear regression

- Decision trees

- Random forests

- Support vector machines

These models are powerful for structured data (like spreadsheets and databases) and are often faster and less resource intensive than deep learning models.

Machine learning models work well when,

- The dataset is moderate in size

- The problem is clearly defined

- Interpretability is important

2.2 Deep Learning Models

Deep learning models are a more advanced type of machine learning model. They use neural networks with multiple layers to analyze complex data such as images, audio, and natural language.

These models are inspired by the structure of the human brain.

Deep learning is commonly used for areas like,

- Image recognition

- Speech recognition

- Language translation

- Autonomous driving

Deep learning models usually require following,

- Large datasets

- Significant computational power (often GPUs)

- Longer training time

They are particularly effective at handling unstructured data like photos, videos, and text.

2.3 Generative AI Models

Generative AI models go one step further. Instead of just predicting or classifying, they create new content.

These models can generate things like,

- Text

- Images

- Music

- Code

- Video

They are trained on massive datasets and learn patterns in such depth that they can produce original outputs based on prompts.

Following are some examples of Generative AI models,

- Large language models (LLMs)

- Image generation models

- Audio synthesis systems

Generative models are currently driving many of the most exciting AI innovations.

Real-World Examples of AI Models

AI models are not theoretical concepts, they power some of the largest technology platforms in the world.

Here are three major examples,

1) OpenAI

OpenAI develops large language models such as GPT (Generative Pre-trained Transformer). These models are trained on vast amounts of text data to understand and generate human-like language.

Products like ChatGPT use these trained models to,

- Answer questions

- Write content

- Summarize information

- Generate code

The model itself is a deep neural network trained on enormous datasets and refined using human feedback.

2) Google – Search & AI Systems

Google uses AI models across nearly all of its services.

In search, AI models usually does following,

- Understand query intent

- Rank relevant pages

- Detect spam

- Provide direct answers

Beyond search, Google uses AI in,

- Gmail spam detection

- Google Photos image recognition

- Google Maps route optimization

Each of these systems runs on trained AI models designed for specific tasks.

3) Netflix – Recommendation Algorithms

Netflix uses AI models to personalize your homepage.

Its recommendation system does,

- Analyzes viewing history

- Tracks watch time and preferences

- Compares behavior across users

Based on this training data, the model predicts which movies or shows you are most likely to watch next.

Without AI models, Netflix would not be able to personalize content at scale.

4) Amazon – Product Recommendations & Dynamic Pricing

Amazon uses AI models extensively across its platform.

Its recommendation engine analyzes,

- Products you view

- Items you purchase

- Search history

- What similar customers buy

Based on this data, AI models predict which products you are most likely to purchase next. That’s why the “Customers also bought” section often feels surprisingly accurate.

Amazon also uses AI models for,

- Dynamic pricing

- Inventory forecasting

- Fraud detection

- Warehouse robotics optimization

All of this works because trained machine learning models continuously analyze customer behavior and adjust predictions in real time.

5) Tesla – Self-Driving & Autopilot Systems

Tesla’s Autopilot system relies heavily on deep learning models trained on massive amounts of driving data.

The AI model processes,

- Camera inputs

- Road signs

- Lane markings

- Pedestrians

- Traffic patterns

The system uses neural networks trained on millions of miles of driving footage to make decisions such as,

- Steering adjustments

- Speed control

- Obstacle avoidance

Without continuous model training, autonomous driving would not be possible. The more real-world data the system receives, the more refined and accurate the model becomes.

6) Spotify – Personalized Music Recommendations

Spotify uses AI models to curate playlists like,

- Discover Weekly

- Daily Mix

- Release Radar

Its models analyze,

- Songs you skip

- Songs you replay

- Genres you prefer

- Listening time patterns

The AI learns your musical taste over time and predicts what tracks you will enjoy next.

Spotify’s recommendation system combines:

- Machine learning

- Natural language processing (for analyzing song metadata and reviews)

- Collaborative filtering

This creates a highly personalized experience for millions of users worldwide.

3. What Is Training in AI?

An AI model does not start smart. It becomes useful only after going through a process called training.

Training is the stage where raw algorithms transform into systems capable of recognizing patterns, making predictions, and solving real-world problems.

Training in AI is the process of teaching a model using data so it can make accurate predictions or decisions without being explicitly programmed for every situation.

Instead of writing detailed rules like:

- “If email contains this word → mark as spam”

- “If image has these pixels → detect a cat”

developers provide large datasets containing examples, and the model learns the rules automatically.

Below is how training works at a high level:

- The model receives input data

- It makes a prediction

- The system compares the prediction with the correct answer

- The model measures how wrong it was (called error or loss)

- Internal parameters adjust slightly to reduce mistakes

- The process repeats thousands or millions of times

Over time, the model improves its ability to produce correct outputs.

For example:

- A spam detection model learns from labeled emails

- A face recognition system learns from images

- A language model learns from text conversations

Training allows AI systems to learn patterns instead of memorizing instructions.

3.1 Human Learning vs AI Training

AI training becomes much easier to understand when compared to human learning.

Like Teaching a Child Using Examples

Imagine teaching a child to recognize animals.

You do not explain complex biological definitions. Instead, you show examples like below,

- “This is a dog.”

- “This is also a dog.”

- “This is a cat.”

After seeing many examples, the child naturally learns distinguishing features, like fur shape, ears, size, movement. Eventually child identifies animals correctly on their own.

AI models learn in the same way as above.

- Data = examples shown to the model

- Training = repeated exposure

- Learning = recognizing patterns

Remember that the more quality examples provided, the better the learning outcome.

Like Training a Dog With Rewards

Another helpful analogy is dog training.

When training a dog:

- Correct behavior → reward

- Incorrect behavior → no reward or correction

- Repetition strengthens learning

This mirrors a major AI training approach called reinforcement learning.

AI models receive feedback during training:

- Correct predictions reduce error (reward)

- Incorrect predictions increase error (penalty)

Over time, the model learns behaviors that maximize success—just like a trained pet learning commands through rewards.

3.2 Why Training Is Important

Training is what transforms AI from simple software into intelligent systems. Even the most advanced AI architecture would produce random or meaningless results without proper training.

Here is why training matters.

1) Improves Accuracy

Each training cycle helps the model refine its understanding.

Early in training,

- Predictions are often incorrect.

- The model guesses randomly.

After sufficient training,

- Predictions become consistent.

- Accuracy improves dramatically.

For example, an image recognition model may initially identify objects incorrectly, but after training on thousands of images, it can achieve human-level recognition accuracy.

2) Reduces Errors

Training works by continuously minimizing mistakes.

Every incorrect prediction teaches the model something new,

- Which patterns matter

- Which signals to ignore

- How to adjust internal parameters

This process of error reduction is fundamental to machine learning. The model gradually learns the difference between noise and meaningful information.

The results are,

- Better performance

- Fewer false predictions

- More reliable AI systems

3) Makes AI Adaptable

One of the greatest strengths of AI is adaptability.

Traditional software cannot easily adjust to new situations without manual updates. AI models, however, can be retrained or fine-tuned with new data.

This allows AI systems to,

- Adapt to changing user behavior

- Learn new languages or trends

- Improve recommendations over time

- Handle previously unseen scenarios

For example,

- Recommendation engines evolve as user preferences change.

- Fraud detection models adapt to new scam techniques.

- Language models learn emerging vocabulary and topics.

Training enables AI to grow smarter as the world changes.

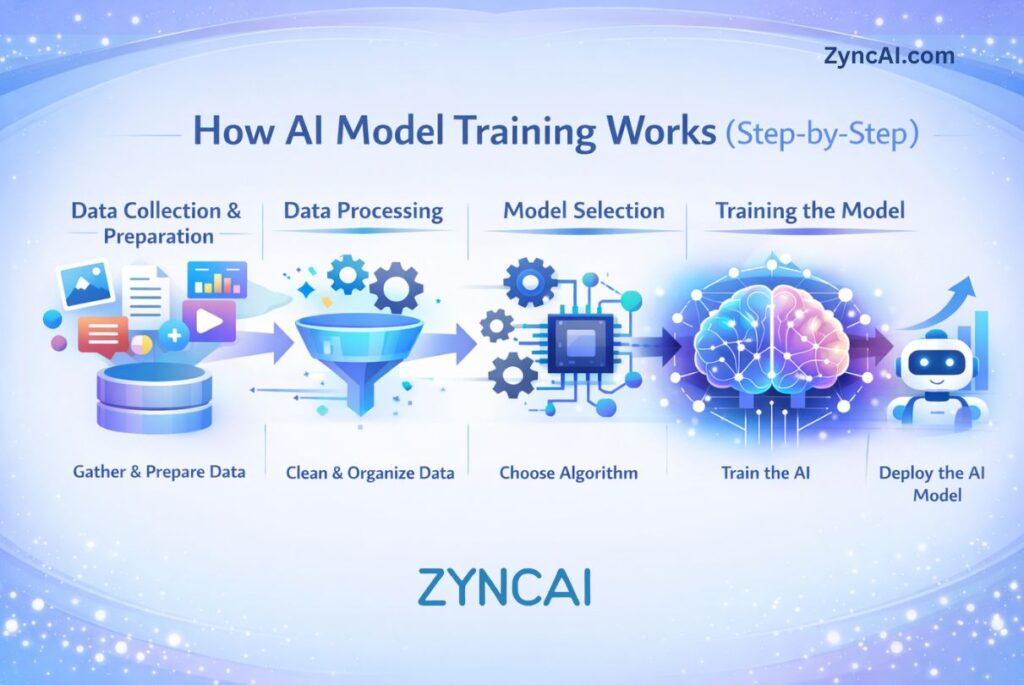

4. How AI Model Training Works (Step-by-Step)

Understanding AI becomes much easier when you break the training process into clear stages. The core training workflow follows the same fundamental steps and also remember that the advanced systems may involve massive infrastructure and billions of parameters.

From collecting data to evaluating performance, every AI model goes through a structured pipeline, as per the steps given below,

Step 1: Data Collection

Every AI model begins with data. Data is the raw material that allows a model to learn patterns.

Without data, there is no training.

There are two main types of data:

Type 01 – Structured Data

- Organized in tables or spreadsheets

- Rows and columns

- Examples: sales records, customer databases, financial transactions

Structured data is easier to analyze and commonly used in business applications.

Type 02 – Unstructured Data

- Not organized in fixed formats

- Includes text, images, audio, and video

- Examples: social media posts, photos, emails, voice recordings

Unstructured data is more complex but powers many modern AI systems like chatbots and image recognition tools.

Importance of Quality Data

Data quality directly impacts model performance.

Poor data can lead to,

- Biased predictions

- Inaccurate outputs

- Overfitting

- Weak generalization

High-quality data should be,

- Accurate

- Relevant

- Diverse

- Properly labeled (for supervised learning)

In AI, there is a common phrase:

“Garbage in, garbage out.”

Even the most advanced algorithm cannot compensate for poor-quality data.

Step 2: Data Preprocessing

Raw data is rarely ready for training. It usually contains errors, inconsistencies, or irrelevant information.

Data preprocessing prepares the dataset for effective learning.

1) Cleaning

This step removes or fixes,

- Missing values

- Duplicate entries

- Incorrect labels

- Outliers

For example,

- Fixing incomplete customer records

- Removing corrupted images

- Correcting mislabeled spam emails

Clean data improves training stability and reliability.

2) Normalization

Different features may have different scales.

For example:

- Age: 25

- Income: 50,000

- Rating: 4.5

If left unscaled, larger numbers (like income) may dominate the learning process.

Normalization ensures that,

- All input features are on a similar scale

- The model learns fairly from each feature

This improves convergence and performance.

3) Feature Engineering

Feature engineering involves selecting or creating meaningful inputs for the model.

For example,

- Extracting keywords from text

- Calculating customer lifetime value

- Converting timestamps into time-of-day categories

Good feature engineering can significantly improve model accuracy without changing the algorithm itself.

In many cases, this step is where domain expertise adds the most value.

Step 3: Choosing an Algorithm

Once data is ready, the next step is selecting the appropriate algorithm.

Different problems require different approaches.

Linear Regression

Used for predicting numerical values.

Example:

- Predicting house prices

- Forecasting sales revenue

It works by finding a line (or equation) that best fits the data.

Best for:

- Simple relationships

- Structured data

Decision Trees

Used for classification or regression tasks.

Example:

- Spam detection

- Loan approval decisions

Decision trees split data into branches based on conditions (like yes/no questions).

They are:

- Easy to interpret

- Good for structured datasets

Neural Networks

Used for complex pattern recognition.

Example:

- Image recognition

- Speech recognition

- Language modeling

Neural networks consist of multiple layers that process information in stages.

They are:

- Powerful

- Flexible

- Data-intensive

- Computationally demanding

The algorithm you choose depends on:

- Problem type

- Dataset size

- Available computing resources

- Required accuracy

Step 4: Training Process

This is where actual learning happens.

The prepared data is fed into the chosen algorithm, and the model begins adjusting its internal parameters.

Feeding Data Into the Model

The model receives input data in batches.

For each batch,

- It makes predictions

- Compares predictions to actual results

The difference between prediction and truth is called error or loss.

Adjusting Parameters

The model contains internal weights (parameters).

During training:

- The system calculates how wrong the prediction was

- It adjusts weights slightly to reduce error

- This process repeats many times

This optimization process often uses a method called gradient descent, which gradually moves the model toward lower error.

Minimizing Error

The goal of training is simple:

Reduce prediction error as much as possible.

Training continues for multiple cycles (called epochs) until:

- Error stabilizes

- Accuracy improves

- Performance reaches acceptable levels

At this stage, the model has learned meaningful patterns from the data.

Step 5: Evaluation

Training alone is not enough. A model must be tested on new data to ensure it truly learned patterns, not just memorized the training set.

Training vs Testing Data

The dataset is usually split into:

- Training data – Used to teach the model

- Testing data – Used to evaluate performance

If a model performs well on training data but poorly on testing data, it may be overfitting (memorizing instead of learning).

The goal is strong performance on unseen data.

Accuracy Metrics

Performance is measured using metrics depending on the task.

For classification tasks:

- Accuracy

- Precision

- Recall

- F1-score

For regression tasks:

- Mean squared error (MSE)

- Root mean squared error (RMSE)

- R-squared

These metrics help determine:

- How reliable the model is

- Whether improvements are needed

- If deployment is ready

Summary of the Steps

The AI training process follows a logical progression,

- Collect data

- Clean and prepare data

- Choose the right algorithm

- Train by minimizing errors

- Evaluate performance

Large-scale AI systems may involve billions of data points and powerful computing clusters, but the core principles remain the same.

Understanding this workflow gives you a strong foundation for mastering artificial intelligence.

5. Types of AI Training

Not all AI models learn in the same way. Different training approaches are used depending on the type of data available and the goal of the system. Understanding these training types helps you see how AI systems learn across industries, ranging from fraud detection to robotics to large language models.

The four most common types of AI training are,

- Supervised Learning

- Unsupervised Learning

- Reinforcement Learning

- Self-Supervised Learning

Let’s explore each type in simple terms.

5.1 Supervised Learning

Supervised learning is the most common and widely used type of AI training.

In supervised learning, the model is trained using labeled data. This means every input example comes with a correct output (answer).

Think of it like learning with a teacher who provides both questions and solutions.

Labeled Data

Each training example includes,

- Input data

- Correct output label

For example,

| Email Text | Label |

|---|---|

| “Win a free iPhone now!” | Spam |

| “Meeting scheduled at 3 PM” | Not Spam |

The model learns by comparing,

- Its prediction

- The actual label

It adjusts itself until it can correctly predict labels for new, unseen data.

Example: Spam Detection

Spam detection is a classic supervised learning task.

The process:

- Collect thousands (or millions) of emails.

- Label them as “spam” or “not spam.”

- Train the model on this labeled dataset.

- Test the model on new emails.

Over time, the model learns patterns such as:

- Suspicious keywords

- Unusual formatting

- Certain sender behaviors

Supervised learning is commonly used for:

- Image classification

- Credit risk assessment

- Medical diagnosis

- Sentiment analysis

It works best when large amounts of labeled data are available.

5.2 Unsupervised Learning

Unsupervised learning is different because it uses unlabeled data.

There are no predefined answers.

Instead of being told what is correct, the model tries to discover hidden patterns or structures within the data on its own.

No Labels

In unsupervised learning,

- Inputs are provided.

- No correct outputs are given.

- The model identifies patterns, similarities, or groupings.

For example,

- Customer purchasing data without categories.

- Website user behavior logs without predefined segments.

The model analyzes the data and finds structure automatically.

Clustering

One of the most common unsupervised techniques is clustering.

Clustering groups similar data points together.

Example:

An e-commerce company wants to segment customers based on buying behavior.

Without predefined labels, the AI might group customers into:

- Budget buyers

- Premium shoppers

- Seasonal shoppers

- High-frequency buyers

The business can then use these clusters for targeted marketing.

Unsupervised learning is often used for:

- Customer segmentation

- Market research

- Anomaly detection

- Data compression

It is powerful when labeled data is expensive or unavailable.

5.3 Reinforcement Learning

Reinforcement learning (RL) is based on reward-based learning.

Instead of being given labeled answers, the model learns by interacting with an environment and receiving feedback in the form of rewards or penalties.

It’s similar to learning through trial and error.

Reward-Based Learning

The training process involves:

- An agent (the AI system)

- An environment (where actions happen)

- Actions (choices the agent makes)

- Rewards (positive or negative feedback)

The agent:

- Takes an action.

- Receives feedback.

- Adjusts its strategy to maximize rewards over time.

The goal is to learn the best long-term strategy.

Used in Robotics & Games

Reinforcement learning is widely used in:

- Robotics (movement control, object manipulation)

- Autonomous vehicles

- Game-playing AI

- Resource optimization systems

For example:

An AI trained to play chess receives:

- Positive reward for winning

- Negative reward for losing

Over thousands or millions of games, it improves its strategy.

In robotics:

- Correct movements receive rewards.

- Incorrect movements receive penalties.

The system gradually learns optimal behavior.

Reinforcement learning is powerful but often requires:

- Simulated environments

- Significant computational resources

- Extensive experimentation

5.4 Self-Supervised Learning

Self-supervised learning is a more advanced training method that has become extremely important in modern AI—especially in large language models.

It combines ideas from supervised and unsupervised learning.

How It Works

Instead of relying on manually labeled data, the model creates its own labels from the data itself.

For example, in text training:

- The model reads a sentence.

- Some words are hidden.

- The model predicts the missing words.

Because the correct word already exists in the text, the data labels itself.

This allows training on massive datasets without human labeling.

Used in Large Language Models

Self-supervised learning is used in large language models like:

- ChatGPT

- OpenAI’s GPT models

- Many modern AI research systems

These models are trained on vast text datasets where:

- The model predicts the next word in a sentence.

- Errors are measured automatically.

- Billions of parameters adjust over time.

Self-supervised learning enables:

- Language understanding

- Context awareness

- Text generation

- Code generation

It has dramatically reduced the need for manual labeling at scale.

Comparing the Training Type

| Training Type | Uses Labels? | Learning Method | Data Requirement | Common Algorithms / Techniques | Typical Use Cases | Real-World Examples | Advantages | Challenges |

|---|---|---|---|---|---|---|---|---|

| Supervised Learning | Yes (Human-labeled data) | Learns from correct input-output examples | Requires large labeled datasets | Linear Regression, Logistic Regression, Decision Trees, Random Forest, Neural Networks | Prediction, classification, forecasting | Spam detection, medical diagnosis, fraud detection | High accuracy, clear evaluation metrics, predictable results | Labeling data is expensive and time-consuming |

| Unsupervised Learning | No | Finds hidden patterns and relationships automatically | Works with unlabeled datasets | Clustering (K-Means), PCA, Autoencoders | Customer segmentation, anomaly detection, recommendation grouping | Market segmentation, behavior analysis | No labeling needed, discovers unknown insights | Harder to evaluate correctness |

| Reinforcement Learning | Reward-based feedback | Learns through trial and error interactions | Requires simulated or interactive environments | Q-Learning, Policy Gradients, Deep Q Networks | Decision-making, robotics, optimization | Autonomous driving, robotics control, game AI | Learns complex strategies, adaptive behavior | Computationally expensive, slow training |

| Self-Supervised Learning | Auto-generated labels | Model creates learning signals from raw data | Extremely large datasets | Transformer Models, Contrastive Learning, Masked Prediction | Language models, vision models, multimodal AI | ChatGPT, image understanding systems | Scales efficiently, reduces manual labeling | Requires massive computing resources |

| Semi-Supervised Learning | Partially | Combines small labeled data with large unlabeled data | Moderate labeled + large unlabeled datasets | Pseudo-labeling, Consistency Training | Speech recognition, image classification | Medical imaging, document analysis | Reduces labeling cost while maintaining accuracy | Sensitive to noisy labels |

| Transfer Learning | Usually Yes (fine-tuning) | Reuses knowledge from pre-trained models | Small dataset needed after pretraining | Fine-tuning pretrained neural networks | Specialized AI applications | Custom chatbots, niche image recognition | Faster training, lower cost, strong performance | Depends on quality of base model |

| Online Learning | Optional | Learns continuously from new incoming data | Streaming or real-time data | Incremental learning algorithms | Real-time recommendation systems | Stock prediction, fraud monitoring | Adapts instantly to new data | Risk of model drift or instability |

Each training type serves a different purpose. Modern AI systems often combine multiple methods to achieve better results.

Understanding these training types helps you see how AI systems learn, from detecting spam to powering advanced conversational models.

6. What Is Deep Learning Training?

Deep learning training is one of the most powerful advancements in artificial intelligence. Deep learning models can understand highly complex patterns such as human language, images, speech, and video while the traditional machine learning models learn from structured data and simpler relationships.

Deep learning powers many modern AI breakthroughs. This includes voice assistants, image recognition systems, and conversational AI tools.

Let’s break it down step by step in simple terms.

Neural Networks Explained Simply

Deep learning is based on artificial neural networks, which are inspired by how the human brain processes information.

A neural network consists of layers of connected nodes called neurons:

- Input Layer — receives raw data (text, images, numbers)

- Hidden Layers — analyze patterns and relationships

- Output Layer — produces the final prediction

Each neuron performs small mathematical calculations and passes information forward through the network.

Example:

If the task is image recognition:

- Input layer receives pixel data.

- Hidden layers detect edges, shapes, textures, and objects.

- Output layer predicts: “This is a dog.”

The term deep learning comes from having many hidden layers, allowing the model to learn increasingly abstract features.

Early layers learn simple patterns → deeper layers learn complex understanding.

Backpropagation

Backpropagation is the core learning mechanism of deep neural networks.

After the model makes a prediction, it needs to know:

👉 How wrong was I?

👉 How should I improve?

Backpropagation works by sending the error backward through the network.

The process:

- Model makes a prediction.

- The prediction is compared with the correct answer.

- The system calculates the error (loss).

- Error signals travel backward through each layer.

- Each neuron adjusts its weights slightly.

This backward adjustment allows every layer to improve its contribution to the final prediction.

Without backpropagation, deep learning models would not be able to learn efficiently.

Gradient Descent

Gradient descent is the optimization method used to reduce errors during training.

You can imagine training like trying to reach the lowest point in a valley while blindfolded.

- The height represents prediction error.

- The goal is to move downhill until error becomes minimal.

Gradient descent helps the model take small steps toward better accuracy by updating parameters in the direction that reduces loss.

Key ideas:

- Small adjustments prevent instability.

- Repeated iterations improve performance.

- Training continues until improvement slows.

Variants like:

- Stochastic Gradient Descent (SGD)

- Mini-batch Gradient Descent

- Adam Optimizer

are commonly used to make training faster and more stable.

Why GPUs Are Needed

Deep learning training requires enormous computational power.

Unlike traditional models, neural networks may contain:

- Millions or even billions of parameters

- Massive datasets

- Complex matrix calculations

A standard CPU processes tasks sequentially, but deep learning involves many calculations happening simultaneously.

GPUs (Graphics Processing Units) are ideal because they:

- Perform parallel computations

- Handle matrix operations efficiently

- Dramatically reduce training time

Training that might take months on a CPU can sometimes be completed in days or hours using GPUs.

Modern AI training often uses:

- GPU clusters

- Specialized AI accelerators

- Cloud-based computing infrastructure

This computational demand is one reason large-scale AI development requires significant investment.

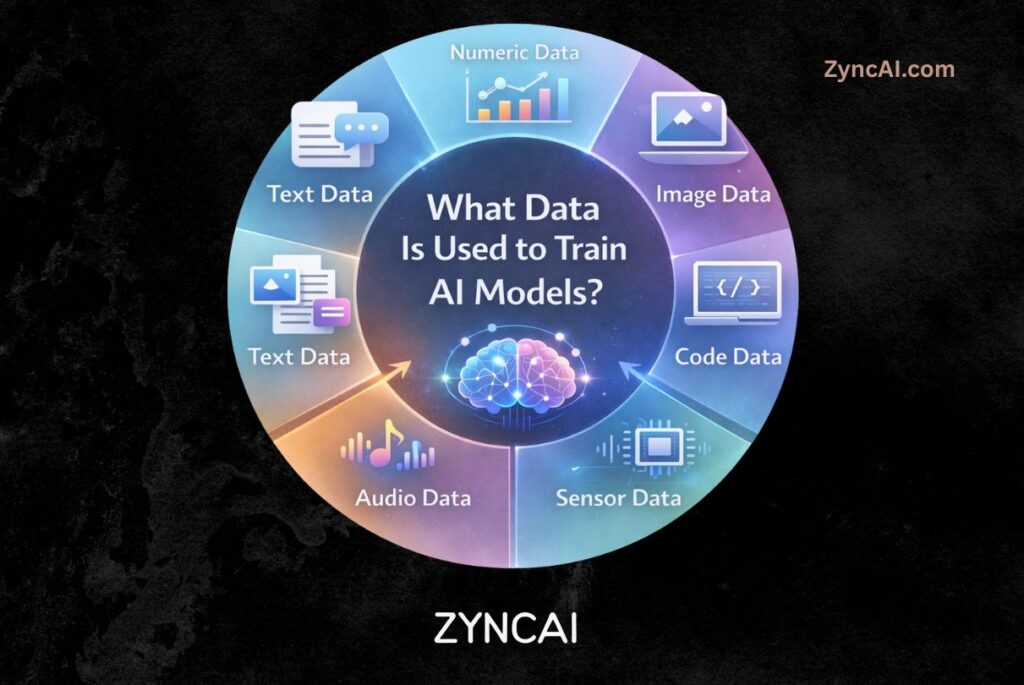

7. What Data Is Used to Train AI Models?

AI models learn entirely from data. The type, quality, and diversity of data directly determine how intelligent, accurate, and reliable an AI system becomes.

Different AI applications require different types of training data. Some models learn language, others understand images, while business AI systems analyze numerical datasets.

Let’s explore the main categories of data used in AI training.

- Text Data

- Images

- Audio

- Video

- Structured Business Data

7.1 Text Data

Text data is one of the most widely used data types in modern AI.

It includes:

- Books

- Articles

- Websites

- Emails

- Chat conversations

- Code repositories

- Customer support messages

Text datasets allow AI systems to learn:

- Grammar and language structure

- Context understanding

- Question answering

- Writing styles and tone

Large language models are trained using massive collections of text to understand how humans communicate.

Common applications:

- Chatbots

- Content generation

- Translation systems

- Search engines

7.2 Images

Image data helps AI models learn visual recognition.

Examples include:

- Photos

- Medical scans

- Product images

- Satellite imagery

- Security camera footage

During training, models analyze patterns such as:

- Shapes

- Colors

- Edges

- Objects

- Facial features

Image-based AI powers:

- Facial recognition

- Autonomous driving perception

- Medical image diagnosis

- Visual search systems

Each image is often labeled to help the model associate visuals with correct categories.

7.3 Audio

Audio data enables AI systems to understand sound and speech.

Examples:

- Human speech recordings

- Podcasts

- Music

- Environmental sounds

- Call center conversations

AI models trained on audio learn to:

- Recognize speech

- Convert speech to text

- Identify speakers

- Detect emotions or tone

Applications include:

- Voice assistants

- Speech transcription tools

- Voice biometrics

- Smart home devices

7.4 Video

Video combines multiple data types simultaneously:

- Images (frames)

- Audio

- Motion patterns

- Temporal context

Training on video allows AI to understand actions and events over time.

Examples:

- Surveillance systems detecting suspicious activity

- Sports analytics tracking player movement

- Autonomous vehicles interpreting traffic scenarios

- Content moderation systems

Video training is computationally intensive because models must process thousands of frames per clip.

7.5 Structured Business Data

Not all AI training uses media content. Many enterprise AI systems rely on structured business data.

Examples:

- Sales transactions

- Customer databases

- Financial records

- Inventory logs

- Website analytics

Structured data usually appears in tables with rows and columns, making it ideal for traditional machine learning models.

Business applications include:

- Demand forecasting

- Fraud detection

- Customer segmentation

- Risk analysis

- Marketing optimization

This type of data drives most AI adoption in organizations today.

Example Platforms Providing Training Data

Large AI systems learn from publicly available and licensed data sources across the internet.

1) YouTube

Provides massive video and audio datasets useful for:

- Speech recognition

- Video understanding

- Multimodal learning

2) Twitter

Offers real-time conversational text useful for:

- Language trends

- Sentiment analysis

- Social behavior modeling

3) Wikipedia

A high-quality structured knowledge source often used for:

- Factual learning

- Language modeling

- Knowledge grounding

Why Data Diversity Matters

High-performing AI models require:

- Diverse datasets

- Multiple languages

- Different demographics

- Various environments and contexts

More diverse training data leads to:

- Better generalization

- Reduced bias

- Improved real-world performance

In AI, data quality often matters more than algorithm complexity.

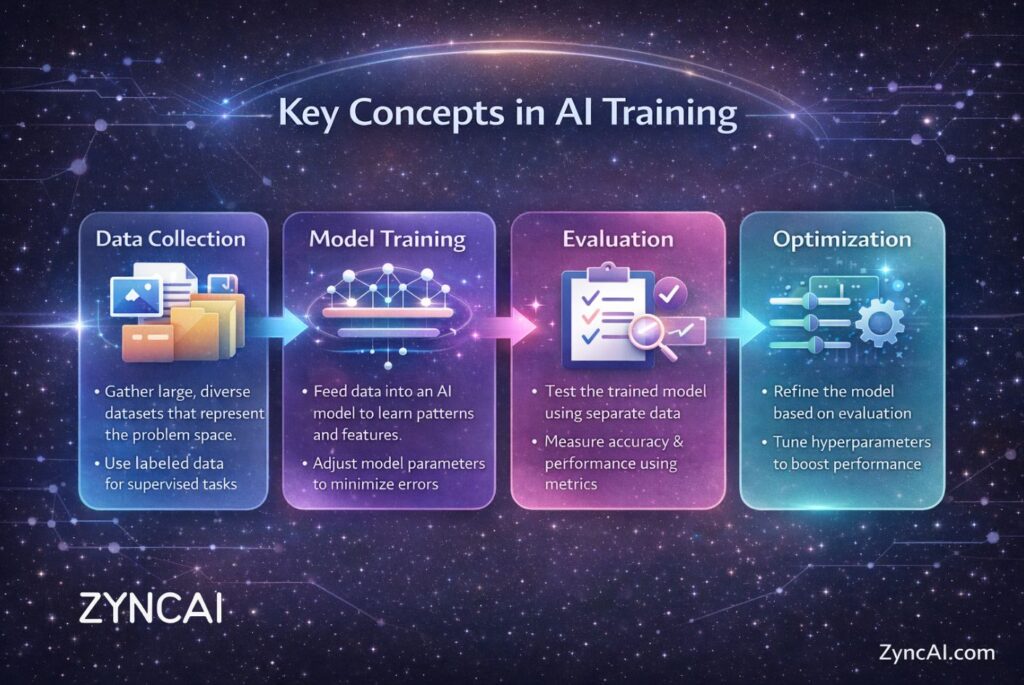

8. Key Concepts in AI Training

When discussing AI training, several technical terms appear frequently. Understanding these concepts helps you interpret how models learn and why training decisions matter.

8.1 Epoch

An epoch represents one complete pass through the entire training dataset.

Example:

If a dataset contains 10,000 images:

- After the model sees all 10,000 images once → 1 epoch

Training usually requires multiple epochs because the model improves gradually with repeated exposure.

Too few epochs:

- Model learns too little.

Too many epochs:

- Model may memorize data instead of learning patterns.

Finding the right number of epochs is crucial for good performance.

8.2 Batch Size

Instead of processing the entire dataset at once, training divides data into smaller groups called batches.

Batch size refers to how many samples are processed before updating the model.

Example:

- Dataset: 10,000 samples

- Batch size: 100

- Model updates after every 100 samples

Small batch sizes:

- More stable learning

- Slower training

Large batch sizes:

- Faster training

- Require more memory

Choosing the right batch size balances efficiency and accuracy.

8.3 Learning Rate

The learning rate controls how big each learning step is during training.

Think of it as the speed at which the model learns.

- High learning rate → Faster learning but risk of instability

- Low learning rate → Stable learning but slower progress

If the learning rate is too high:

- The model may never converge.

If too low:

- Training may take excessively long.

Tuning the learning rate is one of the most important tasks in AI model optimization.

8.4 Loss Function

A loss function measures how wrong the model’s prediction is.

It provides a numerical score representing prediction error.

Examples:

- Mean Squared Error (MSE) for numerical predictions

- Cross-Entropy Loss for classification tasks

Training aims to minimize the loss value.

Lower loss generally means:

- Better predictions

- Improved model performance

The loss function acts like a compass guiding the model toward better accuracy.

8.5 Overfitting vs Underfitting

These are two common problems during training.

Overfitting

Occurs when the model memorizes training data instead of learning general patterns.

Symptoms:

- Excellent performance on training data

- Poor performance on new data

Cause:

- Too complex model

- Too many training epochs

- Limited dataset diversity

Underfitting

Occurs when the model fails to learn meaningful patterns.

Symptoms:

- Poor performance on both training and testing data

Cause:

- Model too simple

- Insufficient training

- Poor feature representation

The goal is to find the balance between these two extremes.

8.6 Model Generalization

Generalization refers to a model’s ability to perform well on new, unseen data.

A well-trained model should:

- Handle real-world scenarios

- Adapt to new inputs

- Avoid memorization

Strong generalization is the true measure of successful AI training.

Techniques that improve generalization include:

- Diverse datasets

- Regularization methods

- Proper validation testing

- Data augmentation

9. How Long Does It Take to Train an AI Model?

One of the most common questions beginners and businesses ask is: How long does AI training actually take?

The answer depends on several factors:

- Model complexity

- Dataset size

- Algorithm type

- Hardware power

- Required accuracy level

AI training time can range from a few minutes to several months.

Small Models: Minutes to Hours

Small machine learning models are often used for business analytics or beginner projects.

Examples:

- Spam filters

- Sales forecasting models

- Customer churn prediction

- Recommendation prototypes

Typical characteristics:

- Thousands to millions of data points

- Simple algorithms (linear regression, decision trees)

- Standard CPU or single GPU training

Training time:

👉 Minutes to a few hours

These models are commonly trained on personal computers or basic cloud instances.

Medium Models: Days

Medium-scale models involve more complex data or deeper neural networks.

Examples:

- Image classification systems

- Speech recognition models

- Advanced recommendation engines

- NLP models for specific industries

Characteristics:

- Millions of training samples

- Deep learning architectures

- GPU acceleration required

Training time:

👉 Several hours to multiple days

Organizations typically use dedicated GPU machines or cloud platforms at this stage.

Large Language Models: Weeks or Months

Large-scale AI models represent the most advanced category.

Examples include:

- Large language models

- Multimodal AI systems

- Advanced generative models

Models like ChatGPT require:

- Massive datasets (billions of tokens)

- Thousands of GPUs running simultaneously

- Distributed training across data centers

Training time:

👉 Several weeks to months

These models undergo multiple phases:

- Pre-training

- Fine-tuning

- Safety optimization

- Evaluation

Large-scale training is one of the most computationally intensive tasks in modern technology.

Hardware Requirements (GPU Clusters & Cloud Computing)

Training speed is heavily influenced by hardware.

Common hardware used in AI training:

CPUs

- Suitable for small models

- Limited parallel computation

GPUs

- Designed for parallel mathematical operations

- Essential for deep learning

GPU Clusters

- Hundreds or thousands of GPUs connected together

- Used for large AI systems

Cloud Computing

Companies rely on cloud providers such as:

- Microsoft Azure

- Amazon Web Services

- Google Cloud

Cloud platforms allow organizations to scale computing power instantly without owning physical infrastructure.

10. Cost of Training AI Models

Training AI models can range from extremely affordable to extraordinarily expensive depending on scale.

Understanding the cost components helps businesses plan AI adoption effectively.

10.1 Cloud Costs

Cloud computing is often the largest expense.

Costs depend on:

- GPU usage hours

- Storage requirements

- Data transfer

- Distributed computing infrastructure

Typical examples:

- Small project: $10–$100

- Startup-scale model: Thousands of dollars

- Enterprise AI training: Millions of dollars

GPU instances are expensive because they provide specialized high-performance computation.

10.2 Data Labeling Cost

Many AI systems require labeled datasets.

Human annotators may need to:

- Label images

- Categorize text

- Transcribe speech

- Verify outputs

Labeling large datasets can become one of the biggest hidden expenses.

Challenges include:

- Time consumption

- Human error

- Scaling annotation teams

This is why modern AI increasingly uses self-supervised learning to reduce labeling costs.

10.3 Engineering Cost

AI training also requires skilled professionals:

- Machine learning engineers

- Data scientists

- AI researchers

- Infrastructure engineers

Costs include:

- Model design

- Data preparation

- Experimentation

- Optimization and testing

Highly skilled AI talent significantly contributes to overall project expenses.

Example of Large-Scale Training Investments

Training cutting-edge AI systems can cost enormous amounts.

Large organizations invest heavily in:

- Massive GPU clusters

- Data acquisition

- Research and experimentation

Companies like OpenAI, Google, and Meta Platforms spend hundreds of millions of dollars developing advanced AI models.

These investments enable breakthroughs in generative AI, automation, and intelligent systems.

11. Challenges in Training AI Models

Despite its power, AI training comes with significant challenges. Understanding these limitations helps organizations build more responsible and effective AI systems.

11.1 Bias in Data

AI learns directly from data. If training data contains bias, the model may inherit those biases.

Examples:

- Underrepresentation of certain groups

- Historical societal bias in datasets

- Cultural or language imbalance

Consequences:

- Unfair predictions

- Ethical concerns

- Reduced trust in AI systems

Responsible data collection and evaluation are essential to reduce bias.

11.2 Overfitting

Overfitting occurs when a model memorizes training data instead of learning general patterns.

Symptoms:

- Excellent training accuracy

- Poor real-world performance

Solutions:

- More diverse data

- Regularization techniques

- Proper validation testing

11.3 Data Scarcity

High-quality data is often difficult to obtain.

Problems include:

- Limited domain-specific datasets

- Privacy restrictions

- Expensive labeling processes

Small datasets can limit model performance and generalization ability.

11.4 Privacy Concerns

AI training may involve sensitive information such as:

- Medical records

- Financial data

- Personal conversations

Organizations must follow privacy regulations and ethical guidelines when handling data.

Techniques like anonymization and federated learning help reduce privacy risks.

11.5 Energy Consumption

Large AI training processes consume significant electricity.

Training massive models requires:

- Large data centers

- Continuous GPU operation

- Advanced cooling systems

This raises environmental concerns and pushes the industry toward:

- Efficient algorithms

- Smaller models

- Sustainable AI infrastructure

12. What Happens After Training?

Training is not the final step. Once a model finishes learning, it enters the post-training lifecycle, where it becomes a usable real-world system.

Step 01 – Validation

Before deployment, the model undergoes validation.

Goals:

- Confirm accuracy on unseen data

- Detect bias or instability

- Ensure reliability

Validation ensures the model learned genuine patterns rather than memorizing training examples.

Step 02 – Deployment

Deployment means integrating the trained model into real applications.

Examples:

- Chatbot integrated into a website

- Recommendation engine inside an app

- Fraud detection system running in banking software

Deployment converts AI research into practical business value.

Step 03 – Monitoring

AI models must be monitored continuously after deployment.

Why?

Real-world data changes over time.

Monitoring tracks:

- Prediction accuracy

- System performance

- Unexpected behavior

- Model drift

Without monitoring, model performance can degrade.

Step 04 – Fine-Tuning

Fine-tuning improves a pre-trained model using specialized data.

Examples:

- Training a general language model for legal writing

- Customizing a chatbot for customer support

- Adapting image recognition for medical analysis

Fine-tuning is faster and cheaper than training a model from scratch.

Step 05 – Continuous Learning

Modern AI systems are rarely static.

Continuous learning allows models to:

- Learn from new data

- Adapt to evolving environments

- Improve user experience over time

This creates AI systems that grow smarter as they interact with the real world.

13. Real-World Examples of Large Language Model Training

Below are real-world examples showing how organizations apply the large language model (LLM) training pipeline in practice, which includes pre-training, fine-tuning, and human feedback optimization to create powerful AI systems.

1. GitHub Copilot : AI Programming Companion

Built through collaboration between GitHub and OpenAI.

Training stages:

- Pre-training on large public code repositories

- Fine-tuning using developer workflows

- Human evaluation from programmers

Result:

An AI assistant that writes code, suggests fixes, and accelerates software development.

2. Google Gemini : Multimodal AI System

Developed by Google.

Training includes:

- Text, image, audio, and video datasets

- Large-scale transformer training

- Continuous reasoning optimization

Result:

AI powering search, productivity tools, and intelligent assistants.

3. ChatGPT : Conversational AI Assistant

Developed by OpenAI, ChatGPT follows the full LLM training lifecycle:

- Massive pre-training on diverse text datasets

- Instruction fine-tuning for conversations

- Reinforcement Learning from Human Feedback (RLHF)

- Continuous post-deployment improvements

Result:

A widely used conversational AI helping with writing, coding, education, research, and productivity.

4. Claude : Safety-Focused Language Model

Created by Anthropic.

Training emphasizes:

- Constitutional AI principles

- Human preference alignment

- Safety-focused reinforcement learning

Result:

A conversational AI optimized for reliability, reasoning, and safer responses.

5. Meta AI : Open Research Language Models

Developed by Meta Platforms.

Training approach:

- Massive open datasets

- Distributed GPU clusters

- Open research experimentation

Result:

AI models supporting researchers, developers, and open innovation ecosystems.

6. Microsoft Copilot : Workplace Productivity AI

Built by Microsoft.

Training pipeline:

- Pre-training on large language datasets

- Fine-tuning for enterprise workflows

- Integration with productivity software

Result:

AI assistance across documents, spreadsheets, meetings, and emails.

7. Amazon Alexa : Voice AI Assistant

Developed by Amazon.

Training includes:

- Speech datasets

- Conversational dialogue learning

- Continuous real-world feedback

Result:

Voice interaction AI capable of natural language understanding and smart home control.

8. Siri : Mobile AI Assistant

Created by Apple Inc..

Training stages:

- Speech recognition pre-training

- Intent classification models

- On-device learning optimization

Result:

AI assistant embedded into millions of smartphones worldwide.

9. IBM Watson : Enterprise AI System

Developed by IBM.

Training approach:

- Domain-specific datasets (healthcare, finance, business)

- Expert-guided fine-tuning

- Knowledge reasoning systems

Result:

Enterprise AI used for analytics, healthcare insights, and decision support.

10. Bard : Conversational Search AI

Built by Google as an early conversational interface powered by LLM technology.

Training involves:

- Web-scale language data

- Search understanding optimization

- Human feedback alignment

Result:

Enhanced conversational search experiences.

11. Perplexity AI : AI Answer Engine

Developed by Perplexity AI.

Training pipeline:

- Large language pre-training

- Retrieval-augmented generation

- Real-time information grounding

Result:

AI search delivering summarized, cited answers.

12. Character.AI : Personality-Driven AI

Created by Character Technologies.

Training focuses on:

- Conversational personality modeling

- Dialogue optimization

- Human interaction feedback loops

Result:

AI characters capable of roleplay, storytelling, and immersive conversations.

13. Midjourney : Multimodal Generative AI

Although image-focused, its system integrates language understanding models.

Training includes:

- Text-image paired datasets

- Prompt understanding learning

- Iterative user feedback refinement

Result:

AI capable of generating high-quality visual content from text prompts.

14. Stable Diffusion : Open Generative Model

Developed by Stability AI.

Training method:

- Large multimodal datasets

- Open-source model experimentation

- Community fine-tuning contributions

Result:

Accessible generative AI widely adopted by developers and creators.

15. Duolingo Max : AI Education Assistant

Powered by LLM technology within Duolingo.

Training includes:

- Language datasets

- Educational dialogue examples

- Pedagogical fine-tuning

Result:

Personalized language tutoring using conversational AI.

Below are real-world examples showing how organizations apply the large language model (LLM) training pipeline in practice — combining pre-training, fine-tuning, and human feedback optimization to create powerful AI systems.

FAQs

Q1. What does training a model in AI mean?

A1. Training a model means teaching an AI system to learn patterns from data so it can make predictions, decisions, or generate outputs without being explicitly programmed for every situation.

Q2. Why is training important in artificial intelligence?

A2. Training transforms a basic algorithm into an intelligent system by allowing it to learn from examples and improve accuracy over time.

Q3. What happens during AI model training?

A3. The model receives data, makes predictions, measures errors, adjusts internal parameters, and repeats this process until performance improves.

Q4. What is an AI model?

A4. An AI model is a mathematical system that learns patterns from data and produces outputs such as predictions, classifications, or generated content.

Q5. What types of data are used to train AI models?

A5. AI models can be trained using text, images, audio, video, and structured business data like spreadsheets and databases.

Q6. What is supervised learning?

A6. Supervised learning trains models using labeled data where each input has a correct answer.

Q7. What is unsupervised learning?

A7. Unsupervised learning uses unlabeled data and allows the model to discover patterns or groupings automatically.

Q8. What is reinforcement learning?

A8. Reinforcement learning trains AI through rewards and penalties based on actions taken in an environment.

Q9. What is self-supervised learning?

A9. Self-supervised learning allows models to create their own labels from raw data, enabling large-scale training without manual annotation.

Q10. How long does it take to train an AI model?

A10. Training can take minutes for small models, days for medium models, and weeks or months for large language models.

Q11. What is deep learning training?

A11. Deep learning training uses neural networks with multiple layers to learn complex patterns such as language understanding or image recognition.

Q12. What is a neural network?

A12. A neural network is a system of interconnected layers that process information similarly to neurons in the human brain.

Q13. What is backpropagation?

A13. Backpropagation is a training process in neural networks where prediction errors are sent backward through the model to adjust internal weights and improve accuracy.

Q14. What is gradient descent?

A14. Gradient descent is an optimization algorithm that helps reduce a model’s error by gradually adjusting its parameters in the direction that minimizes the loss function.

Q15. Why do AI models need GPUs?

A15. GPUs are used because they can perform many mathematical calculations simultaneously, making deep learning training much faster than using standard CPUs.

Q16. What is an epoch in AI training?

A16. An epoch represents one complete pass of the entire training dataset through the AI model during the learning process.

Q17. What is batch size?

A17. Batch size refers to the number of data samples processed before the model updates its parameters during training.

Q18. What is a learning rate?

A18. The learning rate controls how large or small the adjustments are when the model updates its internal parameters during training.

Q19. What is a loss function?

A19. A loss function measures how far the model’s prediction is from the correct answer and guides the model toward better performance.

Q20. What is overfitting in AI?

A20. Overfitting occurs when a model memorizes training data instead of learning general patterns, causing poor performance on new data.

Q21. What is underfitting?

A21. Underfitting happens when a model fails to learn meaningful relationships in the data due to insufficient training or an overly simple model.

Q22. What is model generalization?

A22. Model generalization is the ability of an AI system to perform well on new, unseen data rather than only the training dataset.

Q23. What happens after AI training is complete?

A23. After training, the model goes through validation, deployment, monitoring, fine-tuning, and continuous improvement stages.

Q24. What is fine-tuning in AI?

A24. Fine-tuning is the process of improving a pre-trained model using smaller, specialized datasets to perform specific tasks more accurately.

Q25. What is RLHF (Reinforcement Learning from Human Feedback)?

A25. RLHF is a training method where human feedback is used to rank model outputs, helping AI systems learn preferred and safer responses.

Q26. How much data is needed to train an AI model?

A26. The amount of data required varies widely, ranging from thousands of samples for simple models to billions of examples for large language models.

Q27. Can beginners train AI models?

A27. Yes, beginners can train AI models using beginner-friendly tools, online platforms, and open-source machine learning frameworks.

Q28. Can AI models learn without labeled data?

A28. Yes, techniques like unsupervised learning and self-supervised learning allow models to learn patterns without manually labeled data.

Q29. What affects AI training speed?

A29. Training speed depends on dataset size, model complexity, hardware performance, algorithm efficiency, and optimization settings.

Q30. How expensive is AI model training?

A30. Training costs can range from a few dollars for small experiments to millions of dollars for large-scale enterprise AI systems.

Q31. What are common challenges in training AI models?

A31. Common challenges include biased datasets, insufficient data, privacy issues, high computational costs, and energy consumption.

Q32. What is data preprocessing?

A32. Data preprocessing involves cleaning, organizing, and transforming raw data into a suitable format for effective model training.

Q33. What is model deployment?

A33. Model deployment is the process of integrating a trained AI model into real-world applications such as websites, apps, or business systems.

Q34. Do AI models keep learning after deployment?

A34. Many AI systems continue improving after deployment through retraining, feedback loops, and continuous learning pipelines.

Q35. What industries use AI model training?

A35. Industries including healthcare, finance, education, e-commerce, marketing, transportation, and entertainment rely heavily on trained AI models.

Q36. What is transfer learning?

A36. Transfer learning allows a model trained on one task to be reused and adapted for another related task, reducing training time and data requirements.

Q37. Are large language models trained differently from traditional AI?

A37. Yes, large language models use massive datasets, transformer architectures, self-supervised learning, and human feedback alignment techniques.

Q38. Can AI training be done on a personal computer?

A38. Small and beginner-level models can be trained on personal computers, while large models usually require cloud computing or GPU clusters.

Q39. Is AI training a one-time process?

A39. No, AI models typically require periodic retraining and updates to maintain performance as data and environments change.

Q40. What is the future of AI model training?

A40. The future includes more efficient training methods, smaller yet powerful models, multimodal learning systems, and environmentally sustainable AI development.